Understanding your data

Critical thresholds

How low must a LASS 8–11 subtest score be before the teacher should be concerned about the student’s performance? Put another way: what is the critical cut-off point or threshold that can be used when deciding whether or not a given student is ‘at risk’? Unfortunately, this is not a question that can be answered in a straightforward fashion, because much depends on other factors. These include: (a) the particular LASS 8–11 subtest undertaken, (b) whether the results of other LASS 8–11 subtests confirm or disconfirm the result being examined, and (c) the age of the student being tested.

Traditionally, a score below an SAS of 85 (that is, more than one standard deviation below the mean) is by definition significantly below average and thus indicates an area of weakness that requires some intervention. However, as stated at the start of this chapter, any test score is only an estimate of the student’s ability, based on their performance on a particular day. Because every test score contains some error, scores in the borderline range (i.e. just above SAS 85) could represent ‘true scores’ that fall within the ‘at risk’ range. For this reason, the LASS 8–11 report identifies SAS scores of 88–94 as ‘Slightly below average’ and SAS scores of 75–87 as ‘Below average’. Action is recommended where SAS scores fall in either of these ranges, and the LASS 8–11 report will refer the tester to the Indications for Action table on the GL website, where appropriate. Where there is strong confirmation (e.g. a number of related subtests below an SAS of 88), the assessor can be confident that concern is appropriate.

Where a student scores below an SAS of 75 on any subtest (which is near or below two standard deviations below the mean), this generally indicates a serious difficulty and should always be treated as diagnostically significant; usually it will be a strong indication that the student requires intervention. The LASS 8–11 report identifies SAS scores below 75 as ‘Very low’ and will refer the tester to the Indications for Action table on the GL website. Again, where there is strong confirmation (e.g. a number of related subtests below an SAS of 75), the assessor can be even more confident about the diagnosis.
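The banding described above can be expressed as a simple lookup. The following Python sketch is illustrative only and is not part of the LASS 8–11 software. The function names, the label `Not flagged` for scores of 95 and above, and the default of two related subtests as 'a number' are assumptions made for this example, and SAS values are assumed to be whole numbers.

```python
def sas_band(sas: int) -> str:
    """Map a Standard Age Score (SAS) to the report band quoted in this chapter."""
    if sas < 75:
        return "Very low"
    if sas <= 87:
        return "Below average"
    if sas <= 94:
        return "Slightly below average"
    # Assumption: this section gives no band name for SAS 95 and above,
    # so a placeholder label is used here.
    return "Not flagged"


def has_strong_confirmation(related_sas, threshold, min_count=2):
    """Return True if at least min_count related subtests fall below threshold.

    min_count=2 is a hypothetical reading of 'a number of related subtests'.
    """
    return sum(1 for score in related_sas if score < threshold) >= min_count


if __name__ == "__main__":
    print(sas_band(86))
    print(has_strong_confirmation([84, 86, 101], threshold=88))
```

A band on its own is only a starting point. As the text stresses, the particular subtest, the student's age and the other subtest results all affect how a score should be read.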

However, it should not be forgotten that LASS 8–11 is also a profiling system, so when making interpretations of results it is important to consider the student’s overall profile. For example, an SAS of 95 for reading or spelling would not normally give particular cause for concern. But if the student in question had an SAS of 120 or more on one or both of the reasoning subtests, there would be a significant discrepancy between ability and attainment, which would give cause for concern.
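The ability and attainment comparison in this example amounts to a subtraction. A minimal Python sketch follows. It assumes a hypothetical 25-point gap as the flagging threshold, taken only from the 120 versus 95 example above, since this chapter does not state a formal discrepancy cut-off. The function name and the subtest labels passed in are likewise illustrative.

```python
def ability_attainment_gaps(reasoning, attainment, min_gap=25):
    """List (reasoning subtest, attainment subtest, gap) where the gap is large.

    reasoning and attainment map subtest names to SAS values.
    min_gap=25 is an assumption for illustration, not a published threshold.
    """
    flagged = []
    for r_name, r_sas in reasoning.items():
        for a_name, a_sas in attainment.items():
            gap = r_sas - a_sas  # positive when ability exceeds attainment
            if gap >= min_gap:
                flagged.append((r_name, a_name, gap))
    return flagged


if __name__ == "__main__":
    reasoning = {"Non-verbal reasoning": 120}
    attainment = {"Reading": 95, "Spelling": 104}
    for r_name, a_name, gap in ability_attainment_gaps(reasoning, attainment):
        print(f"{r_name} exceeds {a_name} by {gap} SAS points")
```

Neither score in a flagged pair need fall in an action band. It is the difference between them that prompts a closer look at the profile.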

It should also be noted that Single word reading is the only subtest in the LASS 8–11 suite for which scores are not distributed in a normal curve. In fact, there is a significant negative skew, indicating that most students will achieve a maximum or near-maximum performance (in statistical terms this is sometimes referred to as a ‘ceiling effect’). Single word reading does not have sufficient sensitivity to discriminate amongst students within the average range, and so it should be confined to use with students who are significantly behind in reading development, either to determine their attainment level or evaluate progress.

Understanding profiles

When considering LASS 8–11 results, it is important to bear in mind that it is not one test which is being interpreted, but the performance of a student on a number of related subtests. This is bound to be a more complex matter than single test interpretation. Hence the normative information (about how a student is performing relative to other students of that age) must be considered together with the ipsative information (about how that student is performing in certain areas relative to that same student’s performance in other areas). The pattern or profile of strengths and weaknesses is crucial.

However, it is not legitimate to average a student’s performance across all subtests in order to obtain a single overall measure of ability. This is because the subtests in LASS 8–11 are measuring very different areas of cognitive skill and attainment.

On the other hand, where scores in conceptually similar areas are numerically similar, it is sometimes useful to average them. For example, if scores on the two memory subtests (Sea creatures and Mobile phone) were at similar levels, it would be acceptable to refer to the student’s memory skills overall, rather than distinguishing between the two types of memory being assessed in LASS 8–11 (i.e. visual memory and auditory sequential memory). On the same basis, if scores on the two phonological subtests (Funny words / Non-words and Word chopping / Segments) were at similar levels, it would be acceptable to refer to the student’s phonological skills overall. Note that this applies only to conceptually similar areas and where scores are numerically similar. It would not be legitimate to average scores across conceptually dissimilar subtests (e.g. Non-verbal reasoning and Funny words / Non-words). When scores are dissimilar, this indicates a differential pattern of strengths and/or weaknesses, which will be important in interpretation. In such cases it will be essential to consider the scores separately rather than averaging them. For example, if Sea creatures and Mobile phone produce different results, this will usually indicate that one type of memory is stronger or better developed (or perhaps weaker or less well developed) than the other. This information will have implications for both interpretation and teaching.
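The averaging rule above has two conditions: the subtests must be conceptually similar, and their scores must be numerically similar. The Python sketch below encodes both. The pairings come from the text, but the 10-point tolerance for 'numerically similar' and all function and variable names are assumptions made for illustration only.

```python
from statistics import mean

# Conceptually similar pairs named in the text.
SIMILAR_GROUPS = {
    "memory": ("Sea creatures", "Mobile phone"),
    "phonological": ("Funny words / Non-words", "Word chopping / Segments"),
}


def summarise_group(group, scores, max_spread=10):
    """Return the mean SAS for a group, or None if scores are too far apart.

    max_spread=10 is a hypothetical tolerance; the manual gives no figure.
    """
    values = [scores[name] for name in SIMILAR_GROUPS[group]]
    if max(values) - min(values) <= max_spread:
        return mean(values)
    # Dissimilar scores indicate a differential pattern of strengths and
    # weaknesses, so they should be considered separately.
    return None


if __name__ == "__main__":
    scores = {
        "Sea creatures": 90,
        "Mobile phone": 96,
        "Funny words / Non-words": 80,
        "Word chopping / Segments": 110,
    }
    print(summarise_group("memory", scores))
    print(summarise_group("phonological", scores))
```

Only groups listed in `SIMILAR_GROUPS` can be summarised, so the sketch offers no way to average across conceptually dissimilar subtests.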

Teachers should also remember that the computer is not all-seeing, all-knowing – nor is it infallible. For example, the computer cannot be aware of the demeanour and state of the student at the time of testing. Most students find the LASS 8–11 subtests interesting and show a high level of involvement in the tasks. In such cases the teacher can have confidence in the results produced. Occasionally, however, a few students do not show such interest or engagement and, in these cases, the results must be interpreted with more caution. This is particularly the case where a student was unwell at the time of assessment or had some anxieties about the assessment. Teachers should therefore be alert to these possibilities, especially when results run counter to expectations.

Many LASS 8–11 reports display a complex pattern of ‘highs’ and ‘lows’ that at first sight appears quite puzzling. When tackling such profiles, it is particularly important to bear in mind any extraneous factors that might have affected the student’s performance. Examine the report to see on what days different subtests were done. Motivation, ill health (actual or imminent) and impatience are often causes of a student under-performing, or the student may simply have misunderstood the task (e.g. assuming that they have to do a subtest as quickly as possible when in fact it is accuracy which is most important). Very occasionally it may be found in such cases that the student was simply not taking the test seriously. The fundamental rule of thumb is: if the teacher is not confident about any particular result, then the safest course of action is to repeat the subtest(s) in question after first checking that the student does understand the task(s), is not unwell, and has the right frame of mind to attempt the activities to the best of their abilities.

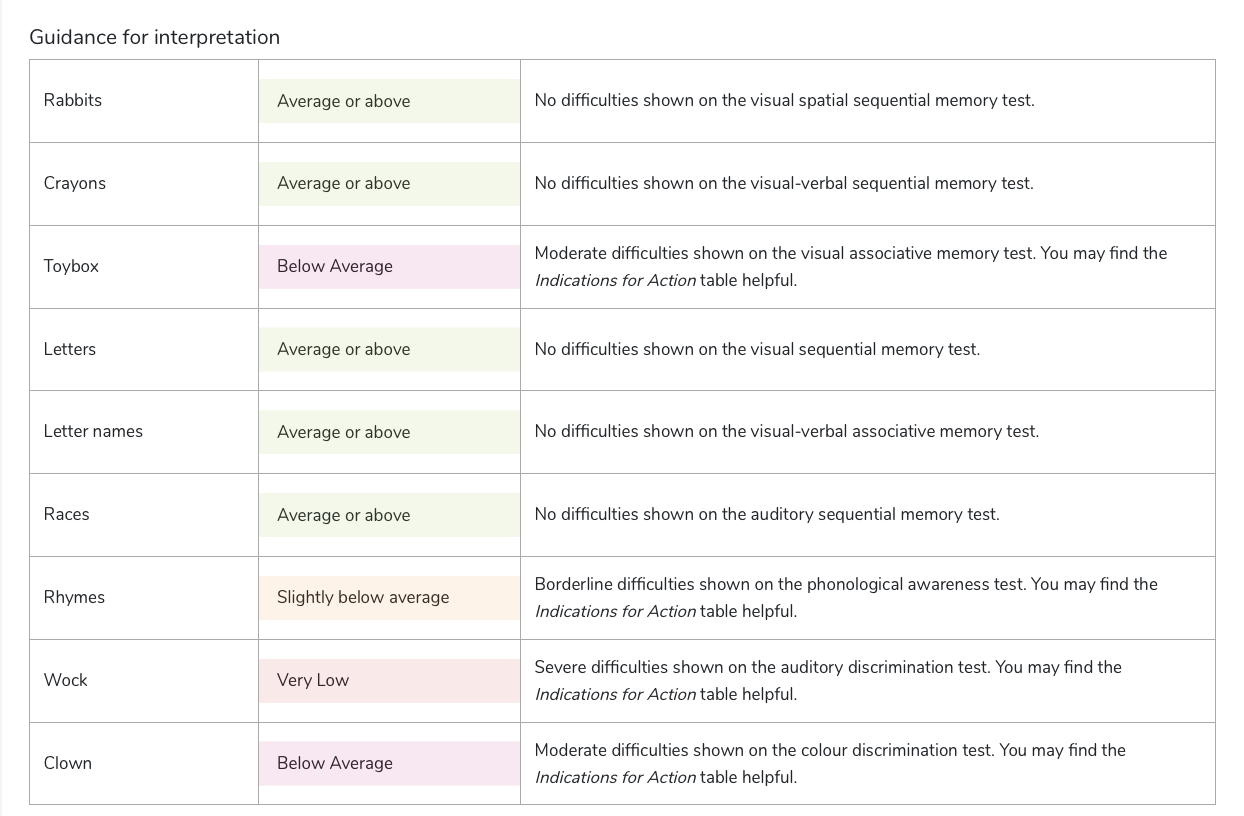

Guidance for interpretation table

Figure 28. Example of the Guidance for Interpretation section of the report

The Guidance for Interpretation table on the report provides enhanced guidance for interpreting each student’s results. Match the guidance to the LASS 8–11 Indications for Action Table, found on the Downloads page.

Interpreting LASS 8–11 results requires consideration of the overall profile, not just of each individual subtest separately. Please see the Case Studies chapter for further guidance on interpreting the whole profile.

Must students be labelled?

Labels for different special educational needs (especially the label ‘dyslexia’) have been controversial for some years. The 1981 Education Act, which encouraged a non-labelling approach to special educational needs, was then superseded by the 1993 Education Act and the Code of Practice for the Identification and Assessment of Special Educational Needs (DfE, 1994). The latter embodies a fairly broad labelling of special educational needs categories, including the category ‘Specific Learning Difficulties (Dyslexia)’ [Code of Practice, 3:60]. The 1996 Education Act consolidated the provisions of previous Acts, in particular the 1993 Act. However, the 1994 Code of Practice was superseded by the 2001 SEN Code of Practice, which again moved away from use of labels and focused instead on areas of need and their impact on learning (DfES, 2001). The latest SEND Code of Practice (DfE, 2014) reiterates that ‘The purpose of identification is to work out what action the school needs to take, not to fit a pupil into a category… The support provided to an individual should always be based on a full understanding of their particular strengths and needs and seek to address them all using well-evidenced interventions targeted at their areas of difficulty’ [SEND Code of Practice, 2014,].

Many teachers are justifiably worried that labelling a student – especially at an early age – is dangerous and can become a ‘self-fulfilling prophecy’. Fortunately, the LASS 8–11 approach does not demand that students be labelled; instead, it promotes awareness of students’ individual learning abilities and encourages teachers to take these into account when teaching. Since the LASS 8–11 report indicates a student’s cognitive strengths as well as limitations, it gives the teacher important insights into their learning styles. In turn, this provides essential pointers for curriculum development, for differentiation within the classroom, and for more appropriate teaching techniques. Hence it is not necessary to use labels such as ‘dyslexic’ when describing a student assessed with LASS 8–11, even though parents may press for such labels.

Identifying cognitive strengths and weaknesses makes it easier for the teacher to differentiate and structure the student’s learning experience in order to maximise success and avoid failure. With appropriate early screening (e.g. with CoPS or LASS 8–11), the hope is that students who are likely to fail, and who might subsequently be labelled ‘dyslexic’, never reach that stage because their problems are identified and tackled sufficiently early. This is not to suggest that dyslexia can be ‘cured’, only that early identification facilitates a much more effective educational response to the condition.